Many developers have gotten used to this: plug a JTAG connector into your embedded target and debug the misbehaviour right down to the hardware. No longer a luxury, is it?

When you move to a soft core from opencores.org for a simple SoC on an FPGA, it may well be. Most of these cores lack a TAP (Test Access Port), unlike off-the-shelf ARM cores.

A while ago we pimped a ZPU soft core with in-circuit emulation features (In Circuit Emulation for the ZPU). This let us connect the GNU debugger to a ZPU core running in hardware, just as we would attach to a Blackfin, an MSP430, you name it.

Having come across various MIPS-compatible IP cores without debug facilities, we felt the urge to create a standard test access port that would work on this architecture as well. There is an existing debug standard for MIPS called EJTAG, but it turned out to be far more complex to implement than simple in-circuit emulation (ICE) using the ZPU TAP mentioned above.

So we would like to do the same as with the ZPU:

- Compile programs with `<arch>-elf-gcc`

- Download programs into the FPGA hardware using GDB

- Set breakpoints, inspect and manipulate values and registers, etc.

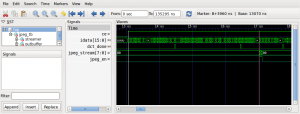

- Run a little “chip scope”

Would that work without a killer effort?

Yes, it would. But we’re not releasing the white rabbit yet. Stay tuned for embedded world 2013 in Nuremberg in February. We (Rene Doß of Dossmatik GmbH, Germany, and I) are going to show some interesting things that will boost your SoC development big time by building on familiar architectures and debug tools.

His MIPS-compatible Mais CPU is well on its way to becoming stable, and is turning out to be a quick bastard while being stingy with resources.

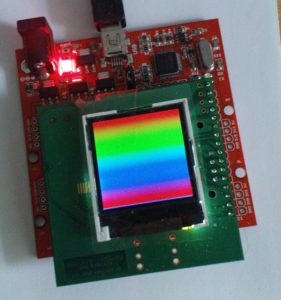

For a sneak peek, here’s some candy from the synthesizer (including I/O for the LCD as shown below):

Selected device: 3s250evq100-5

| Resource | Used | Available | Utilization |
|---|---:|---:|---:|
| Slices | 1172 | 2448 | 47% |
| Slice flip-flops | 904 | 4896 | 18% |
| 4-input LUTs | 2230 | 4896 | 45% |
| IOs | 15 | n/a | n/a |
| Bonded IOBs | 15 | 66 | 22% |
| BRAMs | 2 | 12 | 16% |
| GCLKs | 3 | 24 | 12% |
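The utilization column is simply used/available. As a quick cross-check, this small Python sketch recomputes it from the counts transcribed from the report above for the 3s250evq100-5 (a Spartan-3E XC3S250E in the VQ100 package, speed grade -5). The tool appears to round down rather than to the nearest integer (1172/2448 is about 47.9 %, printed as 47 %), so the sketch uses integer division; that rounding rule is inferred from these numbers, and the names `REPORT`, `utilization_percent` and `summary` are purely illustrative.

```python
# Resource counts (used, available) transcribed from the synthesis report above.
REPORT = {
    "Slices": (1172, 2448),
    "Slice flip-flops": (904, 4896),
    "4-input LUTs": (2230, 4896),
    "Bonded IOBs": (15, 66),
    "BRAMs": (2, 12),
    "GCLKs": (3, 24),
}


def utilization_percent(used: int, available: int) -> int:
    """Utilization in whole percent, rounded down (assumed to match the report)."""
    return used * 100 // available


def summary(report: dict) -> dict:
    """Map each resource name to its rounded-down utilization percentage."""
    return {name: utilization_percent(u, a) for name, (u, a) in report.items()}


if __name__ == "__main__":
    for name, pct in summary(REPORT).items():
        print(f"{name:18s} {pct:3d} %")
```

Roughly half the slices and LUTs of this small part remain free for peripherals and debug logic.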

And for the timing:

Minimum period: 13.498 ns (maximum frequency: 74.084 MHz)
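The maximum frequency is just the reciprocal of the minimum clock period reported by the timing analysis, which is easy to sanity-check by hand:

$$
f_{\max} = \frac{1}{T_{\min}} = \frac{1}{13.498\,\text{ns}} \approx 74.08\,\text{MHz}
$$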

Update: Here’s a link to the presentation (as PDF) given at embedded world 2013 in Nuremberg: