This is a short how-to for getting a Linux-specific Virtual Chip running on Windows:

- Download and install the Docker Toolbox

- Download and install the Xming X-Server

- Run the ‘Docker Quickstart Terminal’, whose shortcut is normally placed on your desktop. Be patient: the environment takes some time to start up.

- Download the MaSoCist Docker image:

docker pull hackfin/masocist

- Optional (GTKwave support): Prepare the Xming server by running XLaunch and choosing the following settings in the wizard:

- Multiple Windows

- Start no client

- ‘No access control’ selected (warning: this could cause security issues, depending on your system configuration)

- Start the container using the script below

docker run -ti --rm -u masocist -w /home/masocist -e DISPLAY=192.168.99.1:0 \
    -v /tmp/.X11-unix:/tmp/.X11-unix hackfin/masocist bash

You might save this script to a file like run.sh and start it next time from the Docker Quickstart terminal:

. run.sh
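As a sketch only, run.sh could also wrap the command in shell functions so that the X display address and image name can be overridden. The variable names MASOCIST_DISPLAY and MASOCIST_IMAGE and the function names are made up for this example; they are not part of MaSoCist or Docker. The default display address is the one used above and may differ on your system.

```shell
#!/bin/sh
# run.sh (sketch): source it with '. run.sh', then call start_masocist.
# MASOCIST_DISPLAY and MASOCIST_IMAGE are hypothetical override variables.
MASOCIST_DISPLAY="${MASOCIST_DISPLAY:-192.168.99.1:0}"
MASOCIST_IMAGE="${MASOCIST_IMAGE:-hackfin/masocist}"

# Print the docker command line without executing it, for inspection.
masocist_cmd() {
    printf '%s\n' "docker run -ti --rm -u masocist -w /home/masocist -e DISPLAY=$MASOCIST_DISPLAY -v /tmp/.X11-unix:/tmp/.X11-unix $MASOCIST_IMAGE bash"
}

# Start the container with the same options as the command shown above.
start_masocist() {
    docker run -ti --rm -u masocist -w /home/masocist \
        -e "DISPLAY=$MASOCIST_DISPLAY" \
        -v /tmp/.X11-unix:/tmp/.X11-unix \
        "$MASOCIST_IMAGE" bash
}
```

With this variant, sourcing the file only defines the functions. Run masocist_cmd to check the resulting command line, then start_masocist to enter the container.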

Depending on the MaSoCist release you’ve got, there are different install methods:

Open source (GitHub)

See README.md for the most up-to-date build notes. GTKwave is not installed by default. Run

sudo apt-get update && sudo apt-get install gtkwave

to install it.
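If you rebuild or re-enter the container often, the install step can be made safe to repeat. The following is a minimal sketch, assuming a Debian-style container where sudo and apt-get are available; the function names have_cmd and ensure_gtkwave are invented for this example.

```shell
#!/bin/sh
# Return 0 if the named command is found on PATH, non-zero otherwise.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

# Install GTKwave only when it is missing (assumes sudo and apt-get exist).
ensure_gtkwave() {
    if have_cmd gtkwave; then
        echo "gtkwave already installed"
    else
        sudo apt-get update && sudo apt-get install -y gtkwave
    fi
}
```

Call ensure_gtkwave inside the container; it refreshes the package lists and installs the package only when gtkwave is not found.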

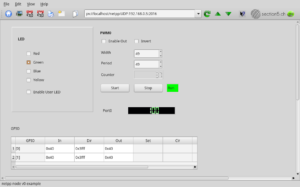

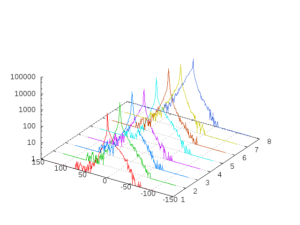

With X support enabled and a virtual board built, run

make -C sim run

from the MaSoCist directory to start the interactive wave display simulation. If the board uses the virtual UART, you can now also connect to /tmp/virtualcom using minicom.
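The virtual UART connection can be sketched as follows. This assumes that the simulation creates /tmp/virtualcom shortly after it starts and that minicom is installed in the container; the function names and the 30 second timeout are arbitrary choices for this example, and no serial settings are implied.

```shell
#!/bin/sh
# Wait until a path exists, polling once per second.
# $1: path to wait for, $2: timeout in seconds.
# Returns 0 as soon as the path exists, 1 on timeout.
wait_for_path() {
    path=$1
    remaining=$2
    while [ ! -e "$path" ]; do
        [ "$remaining" -gt 0 ] || return 1
        sleep 1
        remaining=$((remaining - 1))
    done
    return 0
}

# Attach minicom to the virtual UART once the simulation has created it.
connect_virtualcom() {
    if wait_for_path /tmp/virtualcom 30; then
        minicom -D /tmp/virtualcom
    else
        echo "no /tmp/virtualcom after 30 s; is the simulation running?" >&2
        return 1
    fi
}
```

Since the simulation occupies the console it was started from, connect_virtualcom would be called from a second shell in the same container, for example one opened with docker exec.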

Custom ‘vendor’ license

You should have received a vendor-<YOUR_ORGANIZATION>.pdf with detailed setup notes; see its ‘Quick start’ section.

A few more notes

- All changes you make to this Docker container are lost on exit. If this is not desired, remove the ‘--rm’ option and use the ‘docker ps -a’ and ‘docker start -i <container_id>’ commands to re-enter your container. Consult the Docker documentation for details.

- Closing the GTKwave window will not stop the simulation!

- Ctrl-C on the console stops the simulation, but does not close the wave window

- The UART output of the virtual SoC is printed on the console (“Hello!”). Virtual UART input is not supported on this system, but can be implemented using tools supporting virtual COM ports and Windows pipes.

- Once you have pulled the Docker image, you can alternatively use the Kitematic GUI and apply the above options, in particular:

- -v: Volume mounts to /tmp/.X11-unix

- -e: DISPLAY environment setting
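The note above on keeping changes across sessions can be sketched as a pair of helper functions. This is one possible arrangement, not the documented MaSoCist workflow: the container name masocist-dev, the MASOCIST_NAME variable, and the function names are made up for this example, and a fixed name is used in place of looking up the container ID with ‘docker ps -a’.

```shell
#!/bin/sh
# MASOCIST_NAME is a hypothetical container name chosen for this sketch.
MASOCIST_NAME="${MASOCIST_NAME:-masocist-dev}"

# First start: same options as before, but without --rm and with a fixed name.
create_masocist() {
    docker run -ti --name "$MASOCIST_NAME" -u masocist -w /home/masocist \
        -e DISPLAY=192.168.99.1:0 \
        -v /tmp/.X11-unix:/tmp/.X11-unix \
        hackfin/masocist bash
}

# Later sessions: re-enter the stopped container, keeping earlier changes.
reenter_masocist() {
    docker start -i "$MASOCIST_NAME"
}

# Create the container if no container with that name exists, else re-enter it.
masocist_session() {
    if docker ps -a --format '{{.Names}}' | grep -qx "$MASOCIST_NAME"; then
        reenter_masocist
    else
        create_masocist
    fi
}
```

After sourcing these definitions in the Docker Quickstart Terminal, masocist_session creates the named container on first use and re-enters it afterwards. Remove the container with ‘docker rm masocist-dev’ when the changes are no longer needed.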