For a while, I have been experimenting with various DSP architectures while also playing with FPGA technology. So far, the two worlds stayed separate, each in its own sandbox: the FPGA did the really stupid interfacing and simple transforms, while the DSP did the truly complex encoding.

Now it’s time for a leap: Why not move some often used DSP primitives smoothly into the FPGA?

What kept me from doing it was the tools. Most of the time is actually burned not on the concept or the implementation, but on debugging. Since the tools that help with debugging would cost a fortune, it was simply more economical to put a powerful chip next to the FPGA, even if the FPGA would have had the resources to run a decent number of soft cores in parallel.

Well, it turns out that, after the development work of the past years, our own tools can do the job. The ghdl extensions described here allow verifying processing chains with real data, just by replacing a hard VHDL FIFO module for data input with its virtual counterpart. This VirtualFIFO runs only in the simulation and can be fed with data from outside (via the network).

One good example of a complex processing chain is a JPEG encoder, which is typically implemented in software, i.e. as a serial procedure running on a CPU. If you want to migrate parts of the encoding, such as the computationally expensive DCT, into hardware that really works in parallel, the classical way would be to produce a number of offline data sets and run them through the testbench (simulation) to verify the correct behaviour of your design.

But you could also just loop the simulation into your software, in the sense of a “Software in the Loop” co-simulation.

Hacking the JPEG encoder

So, to replace the DCT of a JPEG routine with our DCT hardware simulation, we have to loop in a piece of code that implements our virtual DCT. We call it “remote DCT”, because the simulation could run on another machine. To talk to the remote DCT, we use the VirtualFIFO, which has a netpp interface, meaning it can be accessed over the network.

The following Python script demonstrates how the remote DCT is accessed:

    import netpp

    d = netpp.connect("TCP:localhost")

    r = d.sync()
    buf = r.Fifo.Buffer
    b = get_next_buffer()  # Example function returning a buffer
    buf.set(b)             # Send buffer b
    rb = buf.get()         # Get return buffer

The C version of this looks a bit more complicated, but it basically makes API calls to netpp to transfer the data and wait for the return. This is simply the concept of swapping local functions for remote procedure calls that are answered by the VHDL simulation.
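The swap itself can be sketched in a few lines of Python. This is only an illustration under assumptions, not the actual encoder code: `local_dct`, `LoopbackFifo`, `make_remote_dct` and `encode_blocks` are hypothetical names, and the loopback class merely stands in for the VirtualFIFO buffer by offering the same `set()`/`get()` pair used in the script above. A real transfer would also have to pack the block into the byte layout the hardware expects, which is left out here.

```python
import math

N = 8  # JPEG operates on 8x8 blocks


def local_dct(block):
    """Software reference: 2D DCT-II of one 8x8 block (list of 8 rows)."""
    def c(k):
        return math.sqrt(0.5) if k == 0 else 1.0

    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            s = 0.0
            for x in range(N):
                for y in range(N):
                    s += (block[x][y]
                          * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                          * math.cos((2 * y + 1) * v * math.pi / (2 * N)))
            out[u][v] = 0.25 * c(u) * c(v) * s
    return out


class LoopbackFifo:
    """Hypothetical stand-in for the VirtualFIFO buffer.

    set() "sends" a block and get() returns the answer. Here the answer is
    computed by a plain Python function instead of the VHDL simulation.
    """

    def __init__(self, process):
        self._process = process
        self._result = None

    def set(self, data):
        self._result = self._process(data)

    def get(self):
        return self._result


def make_remote_dct(fifo):
    """Wrap a FIFO-like object so it can be called like a local DCT."""
    def remote_dct(block):
        fifo.set(block)    # send the block to the (simulated) hardware
        return fifo.get()  # wait for and fetch the transformed block
    return remote_dct


def encode_blocks(blocks, dct):
    """Toy encoder stage: the DCT implementation is passed in as a parameter."""
    return [dct(b) for b in blocks]
```

Because the encoder stage only sees a callable, switching between the built-in routine and the remote one is a one-argument change, e.g. `encode_blocks(blocks, local_dct)` versus `encode_blocks(blocks, make_remote_dct(fifo))`.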

This way, we run our JPEG encoder on the black and white test PNG shown below:

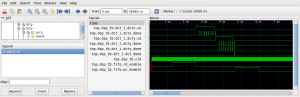

Since the DCT is running in a hardware simulation and not in software, it is very slow: it can take minutes to encode an image. However, what we get from this simulation is a cycle-accurate waveform of what is happening under the hood.

After many hours of debugging and fixing some data flow issues, our virtual hardware does what it should.

And this is the encoded result. By swapping the remoteDCT routine back for the built-in routine, we get a reference image, which we can subtract from the image encoded by the virtual hardware. If the result is all zeros, we know that both methods produce identical results.
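A minimal sketch of that comparison, assuming both images have already been decoded into rows of integer pixel values (the function names are made up for this illustration):

```python
def diff_images(a, b):
    """Pixel-wise difference of two images given as lists of rows of ints."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("image dimensions differ")
    return [[pa - pb for pa, pb in zip(ra, rb)] for ra, rb in zip(a, b)]


def is_identical(a, b):
    """True if the difference image is all zeros."""
    return all(d == 0 for row in diff_images(a, b) for d in row)
```

If `is_identical(reference, hw_encoded)` returns `False`, the non-zero entries of `diff_images()` show which pixels, and therefore which 8x8 blocks, to look at in the waveform.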

Synthesis

Now the interesting part: How many hardware resources are allocated? See the synthesis results output below (this is for a Xilinx Spartan3E 250).

Typically, the timing results from synthesis can’t be trusted: once place & route has completed and fitted other logic as well, the maximum clock will decrease significantly. Since this design uses up all DSP slices (the MULT18X18SIO multipliers) on this FPGA, we’ll only see this as an intermediate station and move on to more gates and DSP power.

    Number of Slices:             872 out of  2448   35%
    Number of Slice Flip Flops:   623 out of  4896   12%
    Number of 4 input LUTs:      1668 out of  4896   34%
    Number of IOs:                 39
    Number of bonded IOBs:         38 out of    66   57%
    Number of BRAMs:                6 out of    12   50%
    Number of MULT18X18SIOs:       12 out of    12  100%

    Timing constraint: Default period analysis for Clock 'clk'
    Clock period: 6.710ns (frequency: 149.032MHz)
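The figures in the report are easy to cross-check. The small helper below is just a sanity-check sketch with made-up function names. It assumes the report truncates the percentages instead of rounding them, which is what the numbers above suggest, and it derives the frequency from the printed period, so the result can differ from the reported value in the last digits.

```python
def utilization(used, available):
    """Utilization in percent, truncated to an integer (assumed report behaviour)."""
    return 100 * used // available


def fmax_mhz(period_ns):
    """Maximum clock frequency in MHz for a given clock period in ns."""
    return 1000.0 / period_ns


resources = {
    "Slices": (872, 2448),
    "Slice Flip Flops": (623, 4896),
    "4 input LUTs": (1668, 4896),
    "bonded IOBs": (38, 66),
    "BRAMs": (6, 12),
    "MULT18X18SIOs": (12, 12),
}

for name, (used, available) in resources.items():
    print(f"{name}: {utilization(used, available)}%")
print(f"fmax: {fmax_mhz(6.710):.2f} MHz")
```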